Deploying a Postgres-Based Open Event Server to Kubernetes

In this post, I will walk you through deploying the Open Event Server on Kubernetes, hosted on Google Cloud Platform’s Compute Engine. You’ll need a Google account for this, so create one if you don’t have one.

First, I cd into the root of our project’s Git repository. Now I need to create a Dockerfile. I will use Docker to package our project into an image which can then be “pushed” to Google Cloud Platform. A Dockerfile is essentially a text document containing the commands required to assemble an image. For more details on how to write one for your project specifically, check out the Docker docs. For Open Event Server, the Dockerfile looks like the following:

FROM python:3-slim

ENV INSTALL_PATH /open_event

RUN mkdir -p $INSTALL_PATH

WORKDIR $INSTALL_PATH

# update the package index and install basic tools

RUN apt-get update && apt-get install -y wget git ca-certificates curl && update-ca-certificates && apt-get clean -y

# install deps

RUN apt-get install -y --no-install-recommends build-essential python-dev libpq-dev libevent-dev libmagic-dev && apt-get clean -y

# copy just requirements

COPY requirements.txt requirements.txt

COPY requirements requirements

# install requirements

RUN pip install --no-cache-dir -r requirements.txt

RUN pip install eventlet

# copy remaining files

COPY . .

CMD bash scripts/docker_run.sh

These instructions install the dependencies and set up the environment for our project. The final CMD instruction runs our project, which, in our case, is a server.
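As a rough sketch of how the image might be built and pushed once the Dockerfile is in place, the helper below wraps `docker build` and `docker push`. The function name `build_and_push` and any image tag you pass to it are assumptions for illustration; they are not part of the Open Event Server repository.

```shell
# Build the image from the Dockerfile in the current directory, then push it.
# The image tag is passed as the first argument; any tag used here is a
# placeholder, not the project's real registry path.
build_and_push() {
  image="${1:?usage: build_and_push IMAGE_TAG}"
  docker build -t "$image" . && docker push "$image"
}
```

For example, `build_and_push example/open-event-server:latest` (a hypothetical tag) would build from the repository root and push only if the build succeeds.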

After our Dockerfile is configured, I go to Google Cloud Platform’s console and create a new project:

Once I enter the project name and other details, I enable billing in order to use Google’s cloud resources. A credit card is required to set up a billing account, but Google doesn’t charge any money for that. Also, one of the perks of being a part of FOSSASIA was that I had about $3000 in Google Cloud credits! Once billing is enabled, I then enable the Container Engine API. It is required to support Kubernetes on Google Compute Engine. The next step is to install the Google Cloud SDK. Once that is done, I run the following command to install the Kubernetes CLI tool, kubectl:

gcloud components install kubectl

Then I set the default Compute Engine zone for the project via the following command:

gcloud config set compute/zone us-west1-a

Now I will create a disk (for storing our code and data) as well as a temporary instance for formatting that disk:

gcloud compute disks create pg-data-disk --size 1GB

gcloud compute instances create pg-disk-formatter

gcloud compute instances attach-disk pg-disk-formatter --disk pg-data-disk

Once the disk is attached to our instance, I SSH into it:

gcloud compute ssh "pg-disk-formatter"

Now I list the available disks:

ls /dev/disk/by-id

This will list multiple disks (as shown in the Terminal window below), but the one I want to format is “google-persistent-disk-1”.

Now I format that disk via the following command:

sudo mkfs.ext4 -F -E lazy_itable_init=0,lazy_journal_init=0,discard /dev/disk/by-id/google-persistent-disk-1

Finally, after the formatting is done, I exit the SSH session and detach the disk from the instance:

gcloud compute instances detach-disk pg-disk-formatter --disk pg-data-disk
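The format, detach, and cleanup steps can also be run without an interactive SSH session. The sketch below assumes the disk is already attached and uses the same disk, instance, and device names as above. The function name `format_and_cleanup` is hypothetical, and deleting the temporary instance at the end is an extra step that this walkthrough does not perform, added here because the instance is no longer needed once the disk is formatted.

```shell
# Format the data disk on the temporary VM, detach it, and delete the VM.
# Disk, VM, and device names are the ones used in this post; deleting the
# VM at the end is an extra step that the walkthrough does not perform.
format_and_cleanup() {
  disk="pg-data-disk"
  vm="pg-disk-formatter"
  device="/dev/disk/by-id/google-persistent-disk-1"
  mkfs_cmd="sudo mkfs.ext4 -F -E lazy_itable_init=0,lazy_journal_init=0,discard $device"

  # Each step runs only if the previous one succeeded.
  gcloud compute ssh "$vm" --command "$mkfs_cmd" &&
    gcloud compute instances detach-disk "$vm" --disk "$disk" &&
    gcloud compute instances delete "$vm" --quiet
}
```

Chaining with `&&` means the instance is never deleted if formatting or detaching fails, so a failed run can still be inspected.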

Now I create a Kubernetes cluster and get its credentials (for later use) via gcloud CLI:

gcloud container clusters create opev-cluster

gcloud container clusters get-credentials opev-cluster
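Before going further, it can help to confirm that kubectl is actually pointed at the new cluster. A minimal check, assuming the credentials were fetched as above (the function name `check_cluster` is just for illustration):

```shell
# Confirm that kubectl now points at the new cluster and that its nodes
# have registered. Both commands are read-only.
check_cluster() {
  kubectl config current-context && kubectl get nodes
}
```

If the context printed is not the one for opev-cluster, re-running the get-credentials command above should switch it.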

I can additionally bind our deployment to a domain name! If you already have a domain name, you can add the IP reserved below as an A record in your domain’s DNS zone. Otherwise, get a free domain at freenom.com and do the same with that domain. This static external IP address is reserved in the following manner:

gcloud compute addresses create testip --region us-west1
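The create command reserves the address; to read it back later, `gcloud compute addresses describe` can print just the IP. The sketch below assumes the same address name and region as above, and the function name `reserved_ip` is hypothetical.

```shell
# Print the reserved static IP. The defaults match the name and region
# used in this post; pass other values as the first and second arguments.
reserved_ip() {
  name="${1:-testip}"
  region="${2:-us-west1}"
  gcloud compute addresses describe "$name" --region "$region" --format='value(address)'
}
```

The `--format='value(address)'` flag limits the output to the address field, which makes it easy to paste into a DNS record or a configuration file.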

It’s useful to note down this IP for later reference. I now add this IP (and our domain name, if I used one) to the Kubernetes configuration files. For Open Event Server, these are separate files for services like nginx and PostgreSQL; the one for the API server and Celery worker is shown here:

#
# Deployment of the API Server and celery worker
#
kind: Deployment
apiVersion: apps/v1beta1
metadata:
  name: api-server
  namespace: web
spec:
  replicas: 1
  template:
    metadata:
      labels:
        app: api-server
    spec:
      volumes:
        - name: data-store
          emptyDir: {}
      containers:
        - name: api-server
          image: eventyay/nextgen-api-server:latest
          command: ["/bin/sh","-c"]
          args: ["./kubernetes/run.sh"]
          livenessProbe:
            httpGet:
              path: /health-check/
              port: 8080
            initialDelaySeconds: 30
            timeoutSeconds: 3
          ports:
            - containerPort: 8080
              protocol: TCP
          envFrom:
            - configMapRef:
                name: api-server
          env:
            - name: C_FORCE_ROOT
              value: 'true'
            - name: PYTHONUNBUFFERED
              value: 'TRUE'
            - name: DEPLOYMENT
              value: 'api'
            - name: FORCE_SSL
              value: 'yes'
          volumeMounts:
            - mountPath: /opev/open_event/static/uploads/
              name: data-store
        - name: celery
          image: eventyay/nextgen-api-server:latest
          command: ["/bin/sh","-c"]
          args: ["./kubernetes/run.sh"]
          envFrom:
            - configMapRef:
                name: api-server
          env:
            - name: C_FORCE_ROOT
              value: 'true'
            - name: DEPLOYMENT
              value: 'celery'
          volumeMounts:
            - mountPath: /opev/open_event/static/uploads/
              name: data-store
      restartPolicy: Always

These files will look similar for your specific project as well. Finally, I run the deployment script provided for Open Event Server to deploy with the defined configuration:

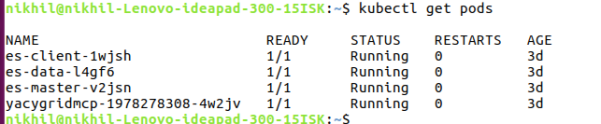

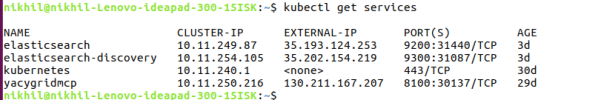

./kubernetes/deploy.sh create all

This is the specific deployment script for this project; for your app, this will be a bunch of simple kubectl create * commands specific to your app’s context. Once this script completes, our app is deployed! After a few minutes, I can see it live at the domain I provided above!
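For a project without such a script, the equivalent might look roughly like the sketch below. It is not the contents of deploy.sh: the function name `deploy_manifests` is hypothetical, the manifest paths are whatever you pass in, and the namespace and Deployment name are taken from the configuration shown earlier.

```shell
# Create the namespace, create each manifest passed as an argument, then
# wait for the api-server Deployment to finish rolling out.
# The manifest paths are whatever you pass in; none are assumed here.
deploy_manifests() {
  kubectl create namespace web || return 1
  for manifest in "$@"; do
    kubectl create -f "$manifest" || return 1
  done
  kubectl rollout status deployment/api-server --namespace web
}
```

Calling it as `deploy_manifests path/to/config.yml path/to/api-server.yml` (placeholder paths) creates the resources in the order given and stops at the first failure.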

I can also see our project’s statistics and set many other attributes at Google Cloud Platform’s console:

And that’s it for this post! For more details on deploying Docker-based containers on Kubernetes clusters hosted by Google, visit Google Cloud Platform’s documentation.

Resources

- Open Event Server developers, FOSSASIA, GCE Kubernetes

- Docs for Kubernetes with GCE, Google, GCE Docs
